story and photos by Mel Lambert

With a reported attendance in excess of 100,000 from some 160 countries, plus 1,800-plus exhibitors, the NAB Show is often described as the world’s largest convention encompassing the convergence of media, entertainment and technology – the self-styled “MET Effect.” Held April 7-12 at the Las Vegas Convention Center, the NAB Show has become an important venue for post-production solutions that address the complexity of content delivery across multiple platforms, from creation to consumption.

As NAB president/CEO Gordon Smith stated in his keynote address, “The FCC has approved voluntary deployment of the NextGen TV standard [also referred to as ATSC 3.0], which promises to deliver the benefits of ultra-high-definition television, interactive features and customizable content.” Smith also stressed that NextGen TV, with its capabilities of delivering HDR, 4K resolution and immersive audio, “promises to enhance viewers’ access to the content they seek.” NAB recently partnered with Capitol Broadcasting Company and NBCUniversal to present the Winter Olympic Games from South Korea using NextGen TV, on an experimental broadcast channel in Raleigh, North Carolina.

“[Audiences] experienced stunning ultra-high-definition video, the first ever live-over-the-air use of Dolby’s immersive audio system, as well as interactive applications,” he continued. Two test-market initiatives for NextGen TV, in Phoenix and Dallas, are currently underway and are planned to be on-air later this year, creating a demand for enhanced content.

As on previous occasions, a two-day summit entitled “Future of Cinema,” co-organized by SMPTE and NAB, addressed the challenge of implementing new technologies for crafting and delivering emergent exhibition formats. Chaired by Chris Witham, director of emerging technology at Walt Disney Pictures, an early session addressed “Getting Ready for Next-Generation Cinema,” including direct-view displays and holographic-like imaging from light-field displays, while “The Future in High Dynamic Range – Are You Ready?” discussed creative options offered by HDR for cinematography, video editing and post-production, including workflows, content and human perception.

As Bill Baggelaar, senior vice president of technology, production and post-production at Sony Pictures Entertainment, pointed out, “Consumers have a preference for HDR,” with EDR (Enhanced Dynamic Range) being “a good first step.” He also stated that while penetration of HDR monitors into the home is still in its early stages – 4K resolution is still a stronger selling point – HDR-enabled TVs are being pursued aggressively by the consumer-electronics industry.

But HDR reference monitors remain a cost bottleneck for content generation and mastering, according to Tyler Pruitt from Portrait Displays. Costs in excess of $25,000 “are too expensive for smaller editorial suites working on unscripted content,” he said. High-end screens are available from Canon, Dolby, EIZO and Sony, in addition to TVLogic and Flanders Scientific. Until they were discontinued, plasma displays served as client monitors (because of black levels and viewing angles) with a look that matched conventional CRTs. Nowadays, OLED TV displays are being used for SDR projects as a cost-effective match to reference monitors. Matching to a reference monitor for HDR is more problematic, Pruitt advised, with grading and editorial suites resorting to manual adjustment of displays to match a reference, with custom LUTs then being used against an alternative white point.

A summit panel entitled “Beyond Cinema – The Emergence of Location Based Entertainment” focused on virtual reality and haptics, and the development of VR-based entertainment experiences for young audiences in urban environments. These range from highly interactive gaming to immersive storytelling and environmental experiences. In essence, they blur the line between physical and digital reality and possibly replace traditional cinemas as an entertainment option.

The conference keynote was delivered by Christopher Buchanan, Samsung Electronics America’s director of business development, who stressed that cinemas are in the process of transforming from movie houses into “immersive entertainment experiences, attracting younger generations while still delivering quality programs that appeal to long-time audiences.” Samsung continues to develop technologies “that deepen the cinematic experience,” he said. While exhibitors need to raise their game against increased competition from streaming services, Buchanan maintained, “Event cinema is about the experience consumers cannot get at home, including IMAX, 4DX and virtual reality.”

“I’m looking forward to seeing Douglas Trumbull’s Magi Pod,” he confessed, referring to an in-process immersive cinema format that might challenge IMAX and Dolby Cinema. Seating between 60 and 70 people in an environment that could compete with premium large-format/PLF offerings at lower costs, Magi Pod uses a Christie laser projector, 32-channel Christie Vive loudspeakers and a partial-spherical curved screen. Buchanan revealed that Samsung is set to launch a modular LED video wall to replace traditional cinema projectors and predicted that within 10 years, theatres will feature holograms, light-field technology and volumetric haptics, enabling audiences to “touch and feel a 3D image.”

On April 20, Samsung unveiled its 4K LED cinema screen at Arclight/Pacific Theatres in Chatsworth, California. Described as the first Digital Cinema Initiatives-compliant HDR LED display, with an audio solution from JBL Professional, it is said to offer the “versatility and premium AV environment necessary to redefine the theatre experience, extend usage opportunities and wow even the most entertainment-savvy consumers.”

High dynamic range and high frame rates will transform the cinematic experience, Buchanan predicted, creating new opportunities for movie-making and media consumption. During his time at Amazon, he contributed to an original content initiative that became Amazon Studios. As part of its content acquisition team, he also licensed film and TV programming for Amazon Video on Demand.

A session entitled “Innovation in the Cloud: Building Comprehensive Media Solutions,” co-organized by USC’s Entertainment Technology Center and Google Cloud and moderated by Seth Levenson, ETC’s director of adaptive production, focused on how media companies can leverage cloud-based solutions to more efficiently scale and build for the future, including content creation and innovations in end-user experiences. Jeff Kember, Google Cloud’s technical director from the office of the CTO, stressed the importance of collaborating with content creation and audiences. “We have products and services to connect the two, providing Tier 1 content on our cloud from the studios,” he said, adding that Google is now a partner for ecosystem delivery, with known costs per petabyte for cloud-transfer mechanisms and virtualized supply chains.

A related session from the “Next-Generation Media Technologies” program addressed the emergent themes of machine learning, deep learning and artificial intelligence technologies, and the ways in which studios, networks and creative service companies use them to produce content. Moderated by Kylee Peña, coordinator of production technologies, imaging and sound at Netflix, “Machine Intelligence: From Dailies to Master” focused on how AI-based technologies can boost productivity, efficiencies and creativity in production planning, animation, visual effects, editorial and post.

As the audience discovered, AI and MI apps have long-term potential to alter creativity and workflow. Adrian Graham, Google’s cloud solutions architect, media and entertainment, outlined the company’s remarkable TensorFlow technology, which can be used to determine the content of image and video files for advanced library search and process implementation. Weyron Henriques, vice president of product development at Deluxe Technology, explained that TensorFlow technology is a key element of its recently launched Deluxe One offering, using Synapse for content creation and specific pathways, from dailies – or even on-set – to let editors quickly locate shots and assemble a playlist.

Finally, a practical workshop within the Post | Production World program entitled “Documentary Editing,” delivered by Christine Steele from Steele Pictures Studios and Kelley Slagle from Cavegirl Productions, provided an in-depth analysis of managing footage using software- and web-based tools, editing workflows, metadata and transcription options, media organization and collaboration tools. Steele demonstrated how she uses story treatments to guide the edit and effectively craft sequences that support a documentary theme – basically a three-act format, with a beginning, middle, and an end – while Slagle addressed legal, copyright and IP issues encountered during a complex edit. Using real-world examples, the duo examined different approaches to documentary story editing that can be applied to non-fiction films, while clarifying technical topics and practical methods to manage media while editing a film using Adobe Premiere Pro and other NLEs.

New Product Unveilings

Turning to new products unveiled at the NAB Show, there were key developments in audio workstations, nonlinear editors, mixing systems and immersive monitoring solutions, with Adobe, Avid and Blackmagic demonstrating enhancements for NLE platforms.

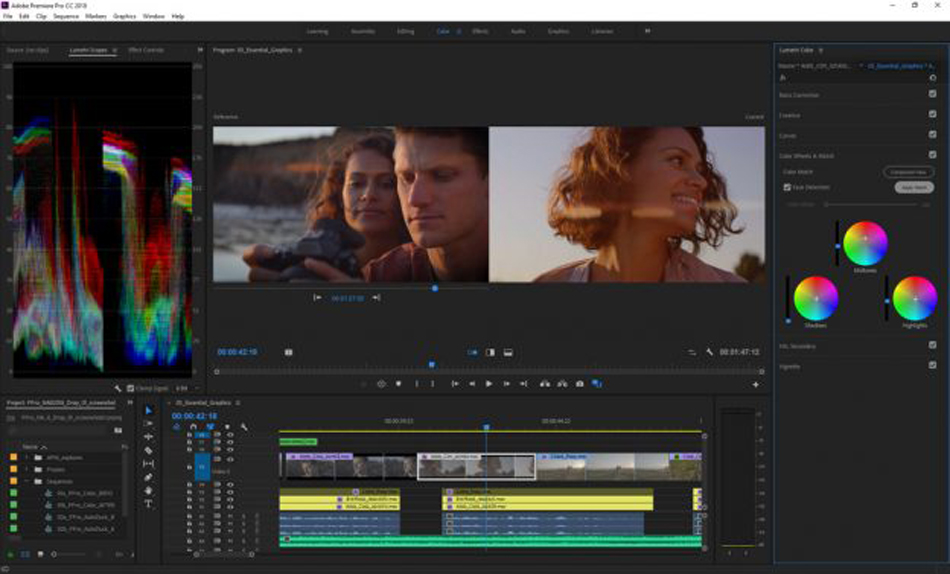

Adobe showcased new features for its Creative Cloud apps, including Premiere Pro CC and After Effects CC, to provide a connected ecosystem with Adobe Stock. Lumetri tools in Premiere Pro, including new Color Match, allow shots to be matched with a single click, applying editable color corrections from one clip to another to establish visual consistency in scenes, with intelligent adjustment for skin tones. Curated HD and 4K footage and motion graphics templates from Adobe Stock can now be auditioned and added within Premiere Pro, while unlimited variations of a single After Effects composition can be managed and added directly to projects. Soundtrack audio can be auto-ducked around dialog within Premiere Pro, with projects capable of being opened directly within Audition CC.

The new CC release introduces features that automate color, graphics and audio tasks, including shot comparison with color matching, with face detection that intelligently adjusts for skin tones in the target image. Also, a split-view option enables comparison of two shots side-by-side or via a wipe slider to see before-and-after color adjustments, while a video Limiter ensures that color grading meets broadcast standards. Premiere Pro adds new format support for camera RAW Sony X-OCN (VENICE), Canon Cinema RAW Light (C200) and RED IPP2.

Avid unveiled updates to the Pro Tools audio-editing platform, which is now available in three flavors: free-of-charge Pro Tools | First, which allows first-time users to compose, record, mix and collaborate in the cloud; Pro Tools, which offers key features for audio and MIDI-based projects; and Pro Tools | Ultimate (previously Pro Tools | HD), which offers a comprehensive post-production toolset, and now includes the Avid Complete Plug-in Bundle as well as Pro Tools | Machine Control, in addition to cloud collaboration enhancements and immersive mixing with Dolby Atmos.

A new subscription version of Avid Media Composer starts at under $20 a month, with up to 24 video tracks, 64 audio tracks and unlimited bins, together with a newly refined user interface and support for 4K, 8K and HDR. The NLE family consists of Media Composer | First for picture editors just beginning their journey; Media Composer; and Media Composer | Ultimate, which offers collaborative functions and shared storage, together with ScriptSync and PhraseFind options. Also available: integrated post workflows centered on MediaCentral | Editorial Management that offer collaborative asset management for Media Composer with Artist | DNxID video I/O hardware and NEXIS software-defined storage.

The Avid Main Stage featured live presentations by post teams behind a number of award-winning movies and TV shows, including a presentation by sound designer and supervising sound editor Douglas Murray, who showed several sound clips from War for the Planet of the Apes. Other featured material came from Game of Thrones, Stranger Things and Westworld.

Blackmagic Design has added a number of new features and capabilities to its DaVinci Resolve editing/grading application, including Fairlight audio post-production and Fusion visual effects, the latter with over 250 tools for compositing, paint, particles and animated titles. V15’s four high-end applications are accessed as different pages for both offline and online editing, color-correction tools, audio post and motion graphics. A collaborative workflow is said to speed up post-production because users no longer need to import, export or translate projects between different applications, while work no longer needs to be conformed after changes have been made. A free version of DaVinci Resolve 15 is available, together with Resolve 15 Studio, which costs $299 and adds multi-user collaboration, 3D, VR, additional filters and effects, unlimited network rendering plus temporal and spatial noise reduction.

As well as incorporating Fusion with Resolve 15, Blackmagic has also added support for Apple Metal, multiple GPUs and CUDA acceleration, for speedier operation; compositions created in the stand-alone version of Fusion can also be copied and pasted into Resolve 15 projects. The Fairlight audio page now offers an ADR toolset, static and variable audio re-timing with pitch correction, audio normalization, 3D panners, audio and video scrollers, a fixed playhead with scrolling timeline, shared sound libraries, support for legacy Fairlight projects, and built-in cross-platform plug-ins that include reverb, hum removal, vocal channel and de-esser. A new LUT browser is said to allow fast previewing and application, along with new shared nodes that are linked.

Turning to plug-ins, Boris FX unveiled new versions of Continuum, Sapphire and Mocha, together with a new App Manager for managing and licensing. Particle Illusion, the motion graphics and particle animation system the firm acquired from GenArts, is now available with an updated user interface, new emitter libraries and faster GPU speeds. Mocha Pro, Mocha VR, Sapphire and Continuum tools now feature a new Essential Interface Mode to help editors and visual effects artists handle tracking and masking challenges; Mocha Pro and Mocha VR include an improved interface, new tools for rotoscoping and mask creation and speed improvements in object removal and clean plating. GPU improvements on Avid and Resolve platforms are said to offer faster effects rendering and playback, together with output of preview images and video to broadcast monitors via Blackmagic and AJA hardware.

Nugen Audio showcased a new extension for Halo Downmix, an application that offers down mixing of feature-film and 5.1 mixes to stereo, together with updates for its Halo line, a new Dolby E extension module for the AMB Audio Management Batch Processor, and loudness plug-ins. The Halo Downmix extension is said to deliver accurate down mixes that are not limited to typical in-the-box, coefficient-based processes, with full adjustment and visual controls for relative levels, timing and direct versus ambient sound balance. The optional 3D Immersive Extension adds down mixing of 7.1.2 Dolby Atmos bed tracks to 7.1, 5.1 and stereo formats; all versions of Halo Downmix now offer improved control over center-channel energy placement for channel counts above stereo.
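For readers unfamiliar with the term, a conventional coefficient-based down mix simply sums the 5.1 channels into left and right using fixed gains. The Python sketch below illustrates that generic approach only; it is not Nugen Audio's algorithm. The function name and the dictionary layout are hypothetical, and the default gains assume the widely used ITU-R BS.775-style convention of folding the center and surround channels in at -3 dB while discarding the LFE channel.

```python
import math

# Assumed convention: center and surrounds are attenuated by 3 dB (1/sqrt(2)).
MINUS_3_DB = 1 / math.sqrt(2)


def downmix_51_to_stereo(frame, center_gain=MINUS_3_DB, surround_gain=MINUS_3_DB):
    """Fold one 5.1 sample frame into a stereo (left, right) pair.

    `frame` is a hypothetical dict of floats keyed L, R, C, LFE, Ls, Rs.
    The LFE channel is deliberately left out of the sum.
    """
    left = frame["L"] + center_gain * frame["C"] + surround_gain * frame["Ls"]
    right = frame["R"] + center_gain * frame["C"] + surround_gain * frame["Rs"]
    return left, right


# Dialog that sits only in the center channel lands equally in both outputs.
dialog_only = {"L": 0.0, "R": 0.0, "C": 1.0, "LFE": 0.0, "Ls": 0.0, "Rs": 0.0}
print(downmix_51_to_stereo(dialog_only))
```

Because the gains are fixed, a process like this cannot rebalance direct against ambient sound or adjust timing, which are the kinds of controls the Halo Downmix extension is said to add.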

In addition to demonstrations of Atmos Immersive Surround Sound for cinema and consumer delivery, Dolby Laboratories showed Atmos sound playback via headphones and earbuds on Samsung smartphones, including the new Galaxy S9 and Galaxy S9+ models. “In addition to the theatre and home, Dolby Atmos transforms the way users experience audio on mobile devices, enabling immersive experiences on the go,” said Giles Baker, senior vice president of the company’s consumer entertainment group. Dolby Atmos for mobile devices is said to bring an immersive sound experience, first used in cinema and increasingly available on TVs and home entertainment devices, to smartphones and tablets, with a more enveloping soundfield, greater subtlety and nuance, crisper dialogue, maximized loudness without distortion, and consistent playback volume.

Dolby Atmos Mastering and Production suites also have been enhanced, with the former now a cross-platform Mac- and PC-native application offering ASIO, Core Audio and Dante support. A new user interface is said to simplify user functions from configuration through metering and monitoring to final mastering. A Renderer Remote application and binaural rendering modes for headphone monitoring are said to improve mix efficiency. Down mixing workflows also are improved, with trim and warp controls, plus the ability to change metadata after-the-fact in a recorded master, thereby simplifying 5.1 and 7.1 renders. Production Suite updates include Dolby Audio Bridge and Core Audio support for non-Pro Tools users; Steinberg Nuendo panning is supported via a VST Multi-Panner.

Also to be seen: use of Apple’s new ProRes RAW codec by several manufacturers, including Sony PXW-FS5, Panasonic AU-EVA1, Canon C300 Mark II and C500 cameras, Apple Final Cut Pro X NLE and the Atomos Shogun Inferno and Sumo monitor/recorders. Available in two 12-bit RGB data variants – ProRes RAW and ProRes RAW HQ – the new codec provides RAW video capabilities at file sizes similar to ProRes 422HQ and ProRes 4444, respectively, and is said to be optimized for 4K HDR productions.

In addition to showing new wares – including the Model 8341A and 8331A three-way coaxial monitors from The Ones Series, and the Model 1032C two-way nearfield monitor with Smart Active Monitoring – Genelec presented a technical paper entitled “Immersive Audio Monitoring for Drama, Games and VR” during which the firm’s senior technologist, Thomas Lund, described Dolby Atmos, Ambisonics and binaural formats for feature and drama production. Highlighting the differences between in-room monitoring and the monitoring needed for binaural playback, Lund pointed out that “head movement, spectral balance, calibrated listening level, SPL requirements and the prevention of production-side listener fatigue” can affect consistency, the end-listener experience and inter-subject variability. “A major practical difference between the various application areas is the defined and much higher volume experienced in the cinema,” he said.

Lund believes that binaural reproduction while viewing visuals on a smartphone or VR headset is likely to become a “credible delivery possibility,” bearing in mind the need for precise, individual head-related transfer functions that take head movement into account. “However, in-room monitoring ensures immersive qualities and translation across a variety of playback conditions, including different binaural rendering devices,” he stressed. The individual features of a listener’s outer ear and body movement affect how he or she hears, both within a natural environment and in rooms set up with audio playback and monitoring equipment.

Glyph unveiled the 4TB Atom RAID SSD portable drive with fast transfer speeds for backing up, storing and transporting video and sound clips. Fully compatible with Mac OS X and Windows platforms, the drive features the latest USB-C connection and is backward compatible with USB 3.0 and Thunderbolt 3 at transfer speeds reaching 860 MB per second. Each unit features two RAID-0 SSDs.
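To put the quoted figures in perspective, a rough best-case calculation shows how long it would take to fill the drive. The Python sketch below assumes decimal units (1 TB = 1,000,000 MB) and treats 860 MB per second as a sustained rate, which real-world transfers may not reach; the function name is hypothetical.

```python
def transfer_minutes(capacity_tb, rate_mb_per_s):
    """Best-case time, in minutes, to move `capacity_tb` terabytes.

    Assumes decimal units and a constant transfer rate.
    """
    capacity_mb = capacity_tb * 1_000_000
    return capacity_mb / rate_mb_per_s / 60


# Filling the full 4 TB at the quoted peak rate of 860 MB per second.
print(round(transfer_minutes(4, 860), 1))
```

Under those assumptions, a full 4 TB transfer works out to a little under 78 minutes.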

AJA showed the new IPR-10G-HDMI Mini-Converter, which converts SMPTE ST 2110 IP video/audio to HDMI format to allow post and AV professionals to output ST 2110 to suitable monitors. The company also introduced ST 2110 support for its KONA IP via the new free-of-charge Desktop Software v14.2. “SMPTE ST 2110 is positioned to drive forward IP video adoption,” said Nick Rashby, company president. “IPR-10G-HDMI and KONA IP, with SMPTE 2110 support, allow facilities to take advantage of uncompressed HD video and audio over IP, while getting the most out of the gear they already have in edit bays, control rooms and other environments.” Also to be seen: a V2.5 firmware with new enhancements for the FS-HDR real-time HDR and Wide Color Gamut/WCG converter/frame synchronizer with Colorfront Engine processing.

Black Box demonstrated a range of keyboard-video-mouse/KVM systems for server rooms, edit and remix suites that provide real-time access to any platform from any user station, with support for end-to-end content acquisition and playout. The products are said to ensure distribution of reliable digital image quality and, when required, fast switch-over of redundant hardware. IP-based KVM provides access over a wide-area network to post-production video libraries with managed receiver and transmitter extenders, together with shared content access between edit, dub and presentation suites.

ZOO Digital’s cloud-dubbing service serves as an on-line dubbing studio for voice artists and directors, addressing a wide range of challenges with the way such a process has traditionally been managed. It is said to offer content owners greater visibility of the production process, plus access to a wider choice of voice talent and the flexibility to work remotely. Using a combination of traditional dubbing studios and controlled recording environments, material can also be recorded in-territory in all key dubbing languages.

Promise Technology, a storage solutions provider for the music and effects market, launched the Atlas series of NAS shared storage systems, and the new SANLink3 N1, a bus-powered Thunderbolt 3 to NBASE-T Ethernet adapter. The Atlas series features connectivity over Thunderbolt 3 and 10 Gbit Ethernet; Atlas S8+ is an eight-bay desktop and Atlas S12+ a 12-bay rackmount unit, with a simple-to-use GUI. SANLink3 N1 allows Thunderbolt 3 devices to be added to an existing Ethernet infrastructure at up to 10 Gbit speeds for high-demand workflows.

The new Minnetonka AudioTools Server now includes a WorkflowEditor and v4 of Linear Acoustic’s APTO adaptive loudness control solution, which manages the loudness of real-time audio streams or file-based assets. WorkflowEditor lets users visualize, edit and control parameters via an easy-to-use dashboard that shows the path files will follow as they move through the created workflows. A new feature allows users to automate regular, time-consuming tasks and decrease turnaround time. With expanded real-time control profiles, APTO v4 maintains regulatory compliance for broadcast, streaming and mobile applications, in audio formats up to 7.1-channel.