by Paul Petschek

When do we make an edit? Where do we make an edit? Increasingly in this post-MTV age of cinema, the question arises: How often do we edit? When I’m lecturing at the Directors Guild, teaching editing workshops for the intensive program at Video Symphony, or teaching Avid-certified courses at Moviola Digital, I find the answer is increasingly: “Edit often.” Students can show observable anxiety if footage lasts more than a second before another cut is made.

Producers, directors and editors may fall prey to a dictate that shorter is always better, even though, as stated excellently in the article “The Magnificent Seven” (CineMontage, MAY-JUN 09) by Angus Wall, co-editor on The Curious Case of Benjamin Button, “Ironically, sometimes it can make the film feel longer if things are faster… Sometimes if a scene is allowed to breathe a little bit, it actually plays a little quicker.”

This essay was inspired over eggs and coffee on a wintry morning on the Sunset Strip, when I got a chance to tell Sam Pollard, well-known editor, director, producer and NYU professor (who recently completed a documentary on President Obama, which will show on HBO in November), about my theory of “frequency.” While students often believe that frequency is created simply by how quickly we edit, there are so many more ways to discover and use frequency, as I will explain. Sam was intrigued.

Put simply, film presents a series of bits of meaningful information, intended to evoke an emotional response in the viewer. We can look to the study of linguistics for a parallel concept. For linguists, “the flow of information” results in “discourse.” In examining discourse, linguists track the flow of “referential” or meaningful information. Frequency, as I am using it here, could be defined as “tracking how often meaningful information occurs in a film sequence.” I am including both images and sounds as meaningful information. Frequency is an appealing concept to me because of its multiple connotations. The concept is commonly used in reference to sound frequencies for equalization and for “pitch.” It can also be used in a different way, referring to “tempo.” I am proposing here to look at frequency as a measurement for visual material, as well as for sound, to assist us in knowing where to cut, why to cut and how often to cut. It will also help us with sound design and visual effects.
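For readers who like to see a definition made concrete, frequency in this sense can be roughly tallied as "meaningful events per second" within a shot. The sketch below is only an illustration under stated assumptions: the function names, the idea of hand-logging events with a timestamp and a source label, and the sample numbers are all hypothetical, and nothing here is a standard editing tool or measurement.

```python
from collections import Counter


def event_frequency(event_times, shot_start, shot_end):
    """Return meaningful events per second inside one shot.

    event_times: timestamps in seconds, logged by hand (an assumption).
    """
    duration = shot_end - shot_start
    if duration <= 0:
        raise ValueError("shot_end must be later than shot_start")
    # Count only the events that fall inside the shot.
    count = sum(1 for t in event_times if shot_start <= t < shot_end)
    return count / duration


def frequency_by_source(events, shot_start, shot_end):
    """Break the same tally down by source (motion, sound, cut, detail...).

    events: (timestamp_in_seconds, source_label) pairs; labels are hypothetical.
    """
    duration = shot_end - shot_start
    if duration <= 0:
        raise ValueError("shot_end must be later than shot_start")
    counts = Counter(
        source for t, source in events if shot_start <= t < shot_end
    )
    return {source: n / duration for source, n in counts.items()}


# Invented log for a ten-second wide shot; not measured from any real film.
sample_events = [(1, "motion"), (2, "sound"), (3, "motion"), (11, "cut")]
print(frequency_by_source(sample_events, 0, 10))
```

A tally like this cannot say whether a shot works. It only makes visible that a long shot with few cuts can still carry a high count of events from movement, sound and detail.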

One of the first places I noticed the impact of frequency in great editing was the famous battle scene in Apocalypse Now, where Robert Duvall’s Colonel Kilgore and his 1/9 Air Cavalry attack a Vietnamese village to the powerful strains of Wagner’s Ride of the Valkyries. I had always thought this was a brilliant and pretty high-action scene. I was curious to see whether this relatively older film employed anything like “quick-cutting,” so I counted the length of the various shots, and found a fairly consistent pattern of two seconds per shot. Pretty quick. But it was in the longer shots that the principle of “frequency” really asserted itself.

(I suggest that you cue this scene before or after reading this. It’s a great watch and the principle becomes so much clearer upon examining these shots.)

One of the first instances of a longer cut is a ten-second wide shot of helicopters moving past each other, criss-crossing in two-dimensional and three-dimensional space. Even though the camera motion itself is not intense, the simple act of the shapes crossing each other, frame by frame, can be seen to increase the frequency of the shot. As every frame presents a different picture, spatially distinct from the next, the human eye is kept very busy, and the frequency of interest is greatly increased. All tracking shots can be shown to share just such a trait, no matter how kinetic.

Simple shapes have a frequency, and a circle would have a different frequency than a square. If the shapes turn in three dimensions, that could result in a higher frequency than moving in two. Great acting has more frequency than bland acting, for there is more going on in each frame. For instance, in the first scene of The Godfather, where you have Marlon Brando playing with a cat while very much being the Godfather, you simply don’t want to cut into it because the frequency of interesting bits is so high.

Even the relatively static shots in the Apocalypse Now sequence have some motion, and whenever motion occurs in a scene, whether it’s a head-turn, an eye-blink or foot-tapping, this beats out a rhythm of meaningful events. Not only does each movement create a rhythm, but it sets up a frequency. In effect, each new event restarts the timing clock, so that the shot can possibly hold longer. And we are all aware of the strong predisposition to “cut on action,” basically to cut on the edges of frequency. Visual details also contribute to the frequency of the shots in Apocalypse Now. In a wide shot, there is the oscillation of every single helicopter blade. A billowy cloud layer surrounds the helicopters. An empty, blue sky would have so much less detail and interest.

When the camera finally pans past the Vietnamese village, one can see how the frequency accelerates throughout the shot. At first, it is low. There is relatively little village activity. The camera tracks right, slowly. Then, more figures start to appear in a distant doorway. As villagers start running towards us, their sounds add to the count, and the camera also appears to speed up. Visually, the oncoming moving arms and legs of the villagers increase the frequency. Someone rushes past camera left, moving on a quick diagonal towards the others, shouting. The camera moves past fencepost after fencepost in the foreground, as each post beats a rhythm or frequency of its own.

Finally, there is a cut to a close-up. Certainly the cut here adds a frequency, and so does the change of angle. The closer shot increases the intensity with which people move through the scene, and the sense of tension has palpably increased. We cut to a telephoto shot of the helicopters—almost a static shot in its timeless composition, where we see layers of sea and sky with helicopters sandwiched in between. The calm before the storm…

The rest of the scene is a master class of just such aesthetic principles in action. When guns fire, you have the frequency of the visual flares and the sounds. There is a shot of farmers and fighters running along a bridge. Every footstep beats out a rhythm. Meanwhile, the camera is twisting and turning, creating a new image with every frame. This high-frequency moment draws on all these elements, yet feels somewhat “homespun” because the footsteps on the bridge create much of the frequency.

Now let’s look at a more recent film, The Matrix. We can see examples of frequency here that are somewhat counter-intuitive. Consider the famous lobby shooting-spree scene. I believe most audiences would experience this scene as “high frequency,” despite the fact that most of the scene is in slow motion! How can this be? First of all, the music is admittedly high frequency––it moves. And there are some quick martial arts moves. A number of editing techniques contribute to frequency in the scene: quick-cutting, jump-cuts, “crossing the line” and “kinetic cutting,” where the abrupt movement of the camera supplies the frequency.

But how does slo-mo fit into this high-frequency moment? Notice that with slo-mo we actually get to see every tiny particle of debris thrown up by the gunfire. Each particle floats outwards as things literally explode in space. Each and every particle is a fascinating visual bit that adds frequency to this image. If we extrapolate from this notion, texture of any kind in an image adds frequency as well. A smooth surface has less frequency. On an action level, when the gun cartridges fall to the floor in slow motion, with a slightly syncopated sound effect, the fact that we can reference each and every one of them creates a higher sense of frequency, not lower. If they fell in real time, they would fall with a kind of indistinct thud. Their falling would become one singular event, not many.

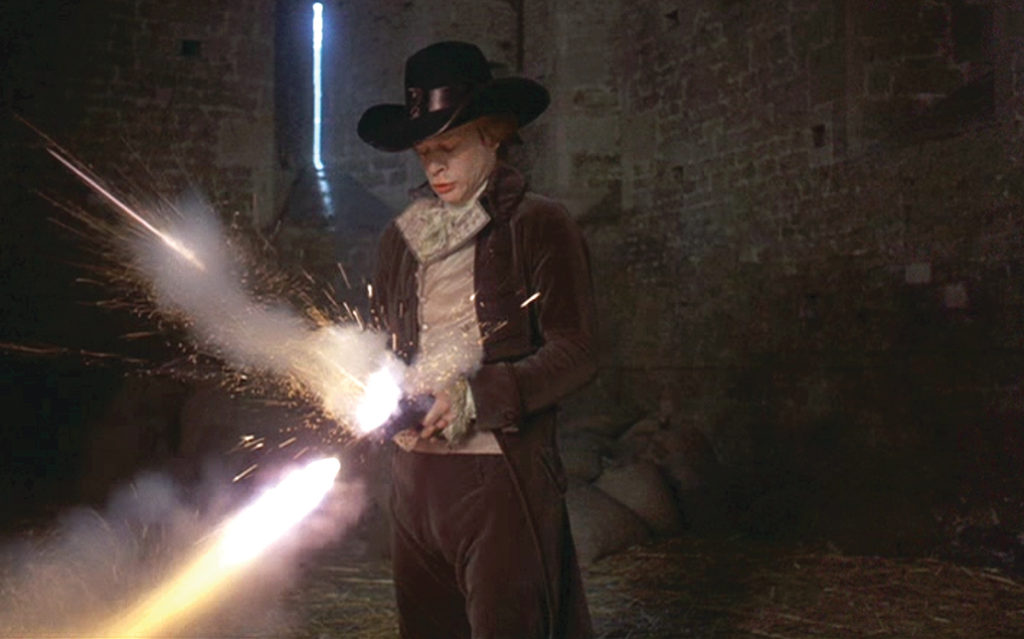

In my editing classes, I find it instructive to contrast the use of frequency elements in another classic fight scene: the final duel in the 1975 film Barry Lyndon. This entire movie is set at what many audiences find a slow pace, certainly by modern standards. After all, the film is trying to re-create life in the 18th century!

What creates the necessary visual and dramatic interest in these scenes? So many have made note of the extraordinary cinematography and the use of natural lighting, but how many make note of the frequency? The craftsmanship in buildings, furnishings and costumes provides meaningful references, and that is this aesthetic principle at work. That is why, with John Alcott’s brilliant cinematography, the detail in the clouds, trees and costumes is worth volumes of a more modernistic treatment.

In the final duel, what is intriguing from a frequency point of view is how exquisitely the filmmakers separate all the actions of the fight. Not only is this in keeping with the traditions of the day, but separating all the actions creates a greater number of separate moments of meaning. It can’t be overestimated how important this is in storytelling. The scene is well worth watching for this exquisite separation of all moments, including the stepson’s nausea, the onlookers’ disdain for his weakness, his need to take a break from the duel… I’ll let those who haven’t seen it reference the scene.

Also interesting in terms of frequency are the more subtle aspects of sounds and images. The cooing sound of the doves dramatically increases the frequency, and thus the action-sense and tension of the scene (very high frequency, that dove cooing). A coin is tossed, then examined on the ground to see whether it is heads or tails. The particles of hay blowing across the etched face of the coin increase frequency, compared to how it might be if shot with a perfectly clear coin face (in the hands of a lesser director and editor). Even the light streaming through narrow slats of windows in the barn adds to the frequency of the scene, as the shards of light reflect dust subtly twisting in the blue haze.

If so many elements of image and sound add to the frequency of a scene, how do we use this to answer the questions of when to edit, where to edit, and how often?

Cutting on meaningful and referential occurrences is one of our first principles. It results in the familiar practice of “cutting on action.” But one could equally say, “Cut on meaningful activity” or “Cut so as to frame meaningful activity.” It leads us to aim always for the right frequency, varying the amounts of picture and sound, so as to deliver to the body of the audience just the right emotion.

In order to properly evaluate this correct frequency, we can pay attention to all our senses and to the results of frequency created through all these methods––whether it be frequency through movement in the frame, frequency created through sound, frequency created through camera movement, frequency created through visual detail, or frequency created through the tempo of cutting itself.

Editors are the arbiters of time and space in the movies. What we create flows through our fingertips, as we establish just the right rhythms and just the right frequencies to move the audience, even to change lives.

Paul Petschek is a freelance editor and teaches editing workshops and Avid-certified courses at Video Symphony TV and Film School and Moviola Education Center. He can be reached at filmctr@aol.com.